Explainable AI for Credit Risk

Dec 2024

The Business Problem

Banks reject loan applications but can't explain why in terms customers can act on. "Your credit score is too low" doesn't help. "Insufficient income" doesn't say by how much.

This creates three problems:

- Regulatory risk: Regulators require explainability in automated lending decisions

- Customer retention: Rejected applicants don't know how to improve and take their business elsewhere

- Fair lending: Without transparency, bias is harder to detect and fix

The opportunity: Turn rejections into retention by showing customers exactly what needs to change.

Scale & Impact

Working with 148,670 loan applications (24.6% default rate), I built a system that not only predicts risk accurately but also explains decisions in actionable terms.

At that rate, roughly 25 of every 100 applicants default. Accurate prediction matters, but so does guiding rejected applicants toward future approval.

The Solution: Counterfactual Explanations

I paired a neural network risk model with a counterfactual explanation generator that shows exactly what needs to change for approval.

Example: Current Status - REJECTED (82% default risk)

To reduce default risk below 20%, you need to:

- Reduce Loan-to-Value ratio from 95% to 80% (a $15,000 larger down payment)

- Reduce Debt-to-Income ratio from 48% to 38% (cut monthly debt payments by $800)

- Extend loan term from 10 to 15 years (lowers the monthly payment burden)

Result: APPROVED (18% default risk)

Instead of "application rejected," customers get a 6-12 month roadmap to approval.
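The dollar amounts in the example are tied to the ratio changes by simple arithmetic. As a sketch, assuming the property value and monthly income stay fixed (the example does not state either), a $15,000 change that moves LTV by 15 points implies a $100,000 property, and an $800 change that moves DTI by 10 points implies $8,000 of monthly income. The helper `implied_denominator` is hypothetical.

```python
def implied_denominator(dollar_change, pct_before, pct_after):
    """Denominator for which `dollar_change` moves a ratio from pct_before% to pct_after%.

    Assumes the denominator itself is unchanged: property value for LTV,
    gross monthly income for DTI (both are assumptions, not given in the example).
    """
    return dollar_change * 100 / (pct_before - pct_after)

property_value = implied_denominator(15_000, 95, 80)  # LTV = loan / property value
monthly_income = implied_denominator(800, 48, 38)     # DTI = monthly debt payments / monthly income

print(f"Implied property value: ${property_value:,.0f}")  # $100,000
print(f"Implied monthly income: ${monthly_income:,.0f}")  # $8,000
```

Under these assumptions the loan shrinks from $95,000 to $80,000, and monthly debt payments fall from $3,840 to $3,040.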

Technical Approach

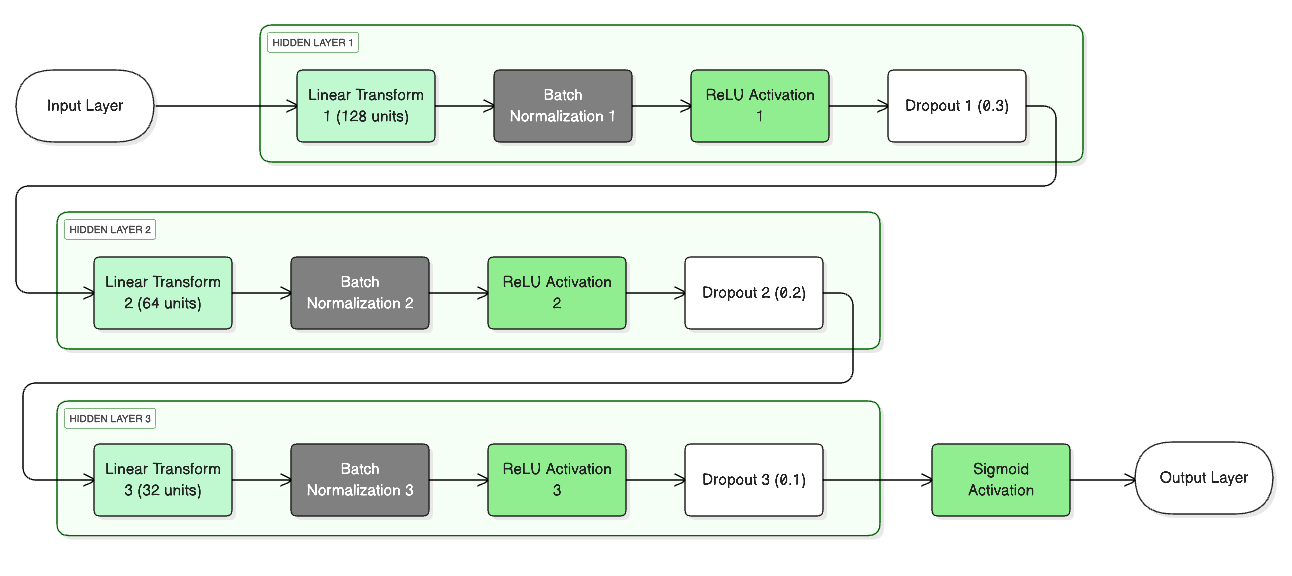

I built a neural network with three hidden layers (128 → 64 → 32 units, 19,649 parameters) to predict default risk. BatchNorm stabilizes training, ReLU activations capture non-linear patterns, and Dropout reduces overfitting.
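The reported 19,649 parameters can be checked by hand. The input width is not stated, so the sketch below assumes 68 input features after encoding. That is the width that reproduces 19,649 exactly when BatchNorm's learnable scale and shift are counted (as PyTorch's trainable-parameter count does) and the output is a single logit. Treat the 68 as an inference, not a documented fact. `mlp_param_count` is a hypothetical helper.

```python
def mlp_param_count(n_inputs, hidden=(128, 64, 32), batchnorm=True):
    """Trainable parameters of an MLP with optional BatchNorm and one output logit."""
    total = 0
    prev = n_inputs
    for width in hidden:
        total += prev * width + width  # Linear weights + biases
        if batchnorm:
            total += 2 * width         # BatchNorm learnable scale (gamma) and shift (beta)
        prev = width
    total += prev * 1 + 1              # single-logit output layer
    return total

print(mlp_param_count(68))                   # 19649 (assumed 68 input features)
print(mlp_param_count(68, batchnorm=False))  # 19201 without BatchNorm
```

BatchNorm's running mean and variance are buffers rather than trainable parameters, so they are not counted here; some tools (e.g. Keras summaries) include them in a "total params" figure.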

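I chose a neural network over tree models such as XGBoost because my counterfactual search is gradient-based, which requires a model that is differentiable with respect to its inputs; tree ensembles are piecewise constant and provide no useful input gradients. The cost is a slight drop in discrimination (about 0.5% AUC-ROC), which I consider worth the explainability gain for regulatory compliance.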

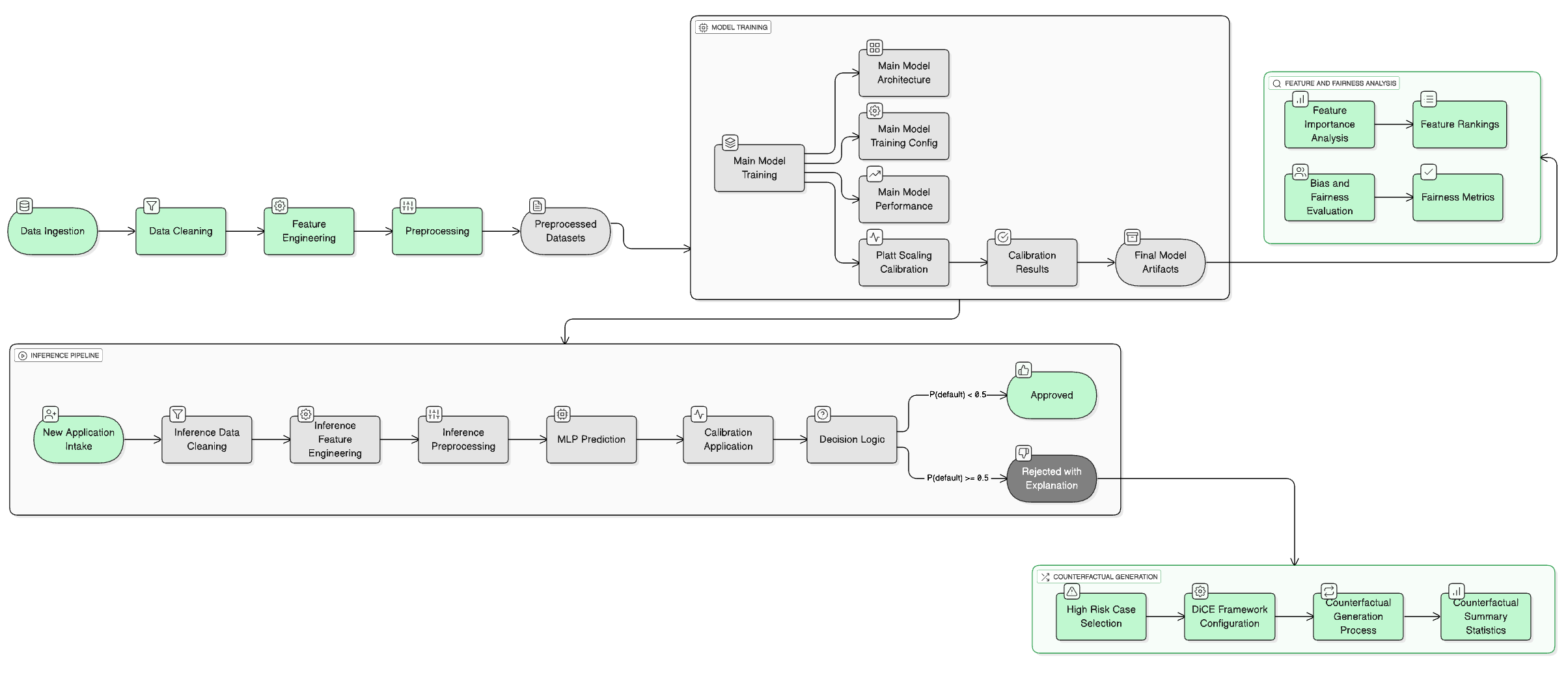

Complete System Architecture

End-to-end pipeline from data preprocessing through model training to counterfactual generation.

Neural Network Architecture

3-layer MLP with BatchNorm, ReLU activation, and Dropout for regularization.
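To make the layer ordering concrete, here is a minimal NumPy sketch of inference through this kind of network. It assumes each block is Linear → BatchNorm → ReLU → Dropout with a single sigmoid output for default probability; the weights are random placeholders, not trained values, and `init_mlp` and `predict_proba` are hypothetical helpers rather than the project's code. At inference time BatchNorm normalizes with its running statistics and Dropout is the identity.

```python
import numpy as np

def init_mlp(n_in, hidden=(128, 64, 32), seed=0):
    """Random placeholder parameters for an MLP with BatchNorm (not trained weights)."""
    rng = np.random.default_rng(seed)
    layers, prev = [], n_in
    for h in hidden:
        layers.append({
            "W": rng.normal(0.0, np.sqrt(2.0 / prev), size=(prev, h)),  # He init for ReLU
            "b": np.zeros(h),
            "gamma": np.ones(h), "beta": np.zeros(h),  # BatchNorm scale and shift
            "mean": np.zeros(h), "var": np.ones(h),    # BatchNorm running statistics
        })
        prev = h
    head = {"W": rng.normal(0.0, np.sqrt(1.0 / prev), size=(prev, 1)), "b": np.zeros(1)}
    return layers, head

def predict_proba(X, layers, head, eps=1e-5):
    """Inference-mode forward pass returning one default probability per row."""
    h = X
    for layer in layers:
        z = h @ layer["W"] + layer["b"]
        z = layer["gamma"] * (z - layer["mean"]) / np.sqrt(layer["var"] + eps) + layer["beta"]
        h = np.maximum(z, 0.0)  # ReLU; Dropout is a no-op at inference
    logits = h @ head["W"] + head["b"]
    return 1.0 / (1.0 + np.exp(-logits[:, 0]))

layers, head = init_mlp(n_in=68)  # 68 input features is an assumption
X = np.random.default_rng(1).normal(size=(5, 68))
probs = predict_proba(X, layers, head)
```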

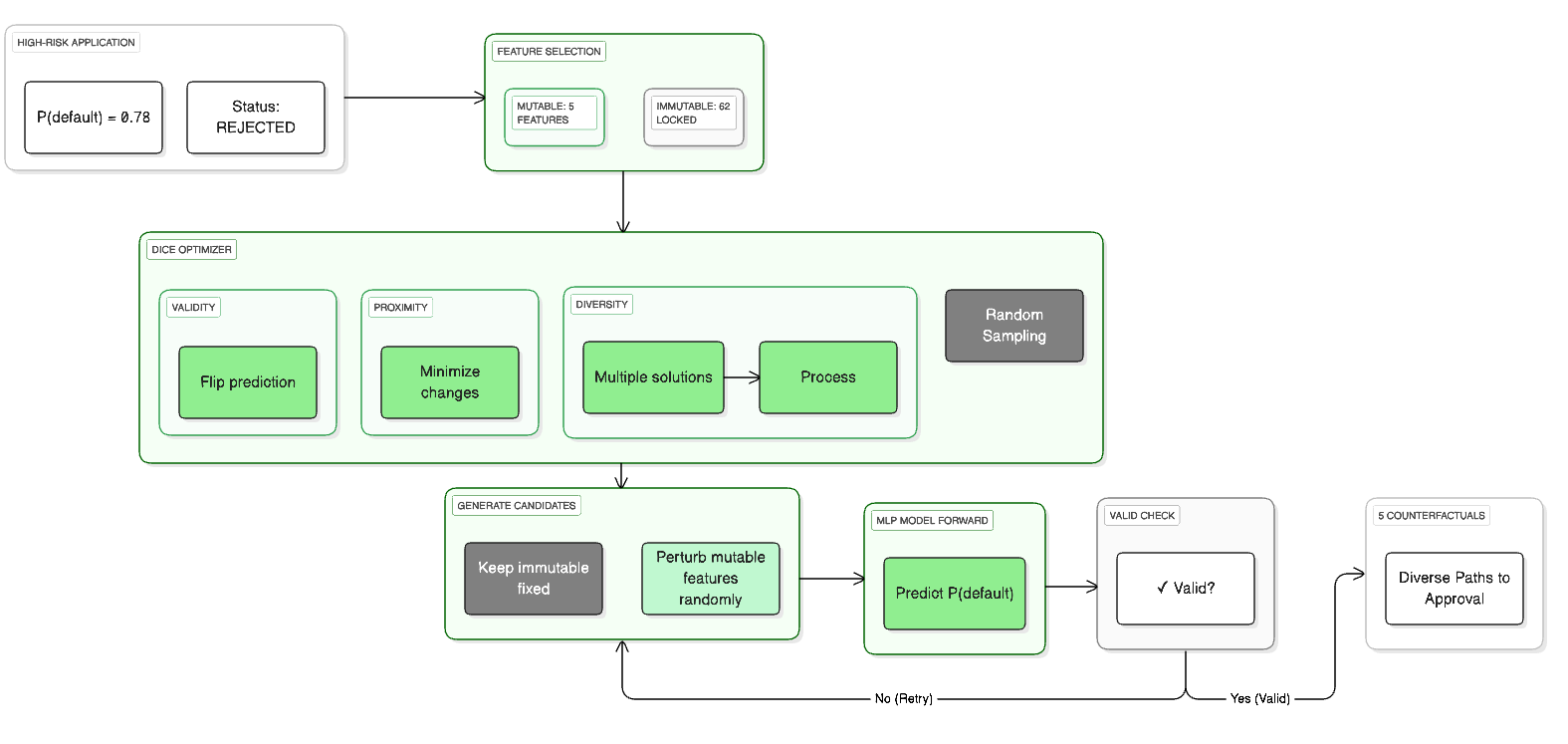

Counterfactual Generation Process

Gradient-based optimization finds minimal feature changes needed to flip prediction from rejection to approval.
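A minimal sketch of gradient-based counterfactual search, assuming a logistic model stands in for the trained network so the gradient can be written by hand. It uses a common formulation: a squared-hinge penalty pushing predicted risk below the threshold plus an L1 penalty on the size of the change, minimized by proximal gradient descent, with immutable features frozen by a mask. The feature names, weights, and hyperparameters (`lam`, `alpha`, `lr`, `margin`) are hypothetical, not the project's settings.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def find_counterfactual(x, w, b, mutable, target=0.20, margin=0.02,
                        lam=10.0, alpha=0.01, lr=0.05, max_steps=5000):
    """Search for x' near x whose predicted default risk is <= target.

    Minimizes lam * max(0, p(x') - (target - margin))**2 + alpha * ||x' - x||_1
    by proximal gradient descent; features with mutable == 0 never change.
    """
    mask = np.asarray(mutable, dtype=float)
    delta = np.zeros_like(x, dtype=float)
    goal = target - margin  # aim slightly below the threshold so the L1 pull cannot stall above it
    for _ in range(max_steps):
        p = sigmoid(w @ (x + delta) + b)
        if p <= target:
            break
        # Gradient of the squared hinge w.r.t. delta (active because p > target > goal).
        grad = lam * 2.0 * (p - goal) * p * (1.0 - p) * w
        delta -= lr * grad * mask
        # Proximal step for the L1 penalty: soft-threshold each change toward zero.
        delta = np.sign(delta) * np.maximum(np.abs(delta) - lr * alpha, 0.0)
    x_cf = x + delta
    return x_cf, float(sigmoid(w @ x_cf + b))

# Hypothetical standardized features: [ltv, dti, term, age]; age is immutable.
w = np.array([2.0, 1.5, -0.5, 0.3])
b = -1.0
x = np.array([1.5, 1.2, 0.0, 0.5])
x_cf, p_cf = find_counterfactual(x, w, b, mutable=[1, 1, 1, 0])
print(round(float(sigmoid(w @ x + b)), 3), round(p_cf, 3))
```

The L1 term discourages unnecessary changes, and the mask is where actionability rules plug in. With a real network, the hand-written gradient would come from automatic differentiation instead.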

Performance Metrics

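- AUC-ROC: 0.888 - an 88.8% chance that a randomly chosen defaulter is ranked as higher risk than a randomly chosen non-defaulter

- Calibration Gap: 0.1% - Predicted and observed default rates agree closely: when the model predicts 30% default risk, the observed rate is ~30%

- Brier Score: 0.088 - Mean squared error of the predicted probabilities (lower is better); always predicting the 24.6% base rate would score about 0.185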

Performance is on par with XGBoost (the industry standard, about 0.5% higher AUC-ROC) while supporting gradient-based counterfactual explanations, which tree models cannot provide.
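For reference, the three metrics can be sketched in plain Python. `calibration_gap` assumes the gap is the absolute difference between mean predicted risk and the observed default rate; the original does not define it, and a binned measure such as expected calibration error is another common choice. The pairwise AUC loop is quadratic and meant only to illustrate the definition; a rank-based implementation is needed at 148,670 rows.

```python
def auc_roc(y_true, y_score):
    """Chance a random defaulter scores higher than a random non-defaulter (ties count half)."""
    pos = [s for y, s in zip(y_true, y_score) if y == 1]
    neg = [s for y, s in zip(y_true, y_score) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def brier_score(y_true, y_prob):
    """Mean squared error between predicted probabilities and 0/1 outcomes."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)

def calibration_gap(y_true, y_prob):
    """Assumed definition: |mean predicted risk - observed default rate|."""
    return abs(sum(y_prob) / len(y_prob) - sum(y_true) / len(y_true))

def base_rate_brier(rate):
    """Brier score of always predicting the base rate."""
    return rate * (1.0 - rate)

y = [0, 0, 1, 1]
p = [0.1, 0.4, 0.35, 0.8]
print(auc_roc(y, p))          # 0.75
print(base_rate_brier(0.246))  # about 0.1855, the naive baseline for a 24.6% default rate
```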

Counterfactual Generation Results

Tested on 13 rejected applications to validate that the system generates realistic, actionable guidance.

- 46 counterfactuals generated - An average of about 3.5 alternative paths per rejection

- 100% success rate - Every rejected application (13 of 13) received actionable guidance

- 2.22 features modified on average - Changes are minimal and achievable

Most counterfactuals need just 2-3 changes, making recommendations realistic for customers to achieve within 6-12 months.
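These summary statistics can be computed directly from the search output. A sketch, assuming results are grouped per rejected application as lists of (original, counterfactual) feature vectors; `summarize` and the tolerance used to decide whether a feature changed are hypothetical. With the reported counts, 46 counterfactuals over 13 applications works out to about 3.54 per rejection.

```python
def summarize(results, tol=1e-9):
    """results: one list per rejected application of (original, counterfactual) pairs."""
    n_apps = len(results)
    all_cfs = [pair for app in results for pair in app]
    # Number of features that differ between each original and its counterfactual.
    changed = [sum(abs(c - o) > tol for o, c in zip(orig, cf)) for orig, cf in all_cfs]
    return {
        "counterfactuals": len(all_cfs),
        "per_rejection": len(all_cfs) / n_apps,
        "success_rate": sum(1 for app in results if app) / n_apps,
        "mean_features_changed": sum(changed) / len(changed) if changed else 0.0,
    }

# Toy input: two applications, three counterfactuals in total.
results = [
    [([1, 2, 3], [0, 2, 3]), ([1, 2, 3], [0, 1, 3])],
    [([4, 5], [4, 4])],
]
stats = summarize(results)
```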

Most Actionable Features

Loan-to-Value, Debt-to-Income, and Term are the top levers. All are controllable by applicants through larger down payments, debt payoff, or loan term adjustments.
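One way to encode these levers is a per-feature constraint table applied to each proposed counterfactual. The sketch below is hypothetical: the feature names, allowed directions, and maximum changes are illustrative placeholders, not the project's settings. It zeroes changes to immutable features (so "be 10 years younger" can never appear), blocks moves in the wrong direction, and caps each change at an amount assumed achievable within 6-12 months.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Constraint:
    mutable: bool
    direction: int     # -1: may only decrease, +1: may only increase, 0: either way
    max_change: float  # largest absolute change assumed achievable (hypothetical)

# Hypothetical constraints in the features' natural units.
CONSTRAINTS = {
    "ltv": Constraint(True, -1, 20.0),         # percentage points
    "dti": Constraint(True, -1, 15.0),         # percentage points
    "term_years": Constraint(True, +1, 10.0),  # years
    "age": Constraint(False, 0, 0.0),          # immutable
}

def project(original, proposed, constraints=CONSTRAINTS):
    """Clip a proposed counterfactual onto the actionable region."""
    feasible = {}
    for name, value in proposed.items():
        c = constraints[name]
        start = original[name]
        delta = value - start if c.mutable else 0.0
        if c.direction == -1:
            delta = min(delta, 0.0)
        elif c.direction == +1:
            delta = max(delta, 0.0)
        delta = max(-c.max_change, min(delta, c.max_change))
        feasible[name] = start + delta
    return feasible

original = {"ltv": 95.0, "dti": 48.0, "term_years": 10.0, "age": 40.0}
proposed = {"ltv": 70.0, "dti": 50.0, "term_years": 15.0, "age": 30.0}
feasible = project(original, proposed)
```

In practice, a projection like this would run inside the search loop rather than once at the end, so the optimizer only explores actionable changes.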

Fairness & Compliance

Tested across demographic groups (age, gender, region) to check fair lending compliance.

- Gender Disparate Impact: 1.021 - Near-perfect parity (1.0 = equal; the commonly used four-fifths-rule range is 0.8-1.25)

- Calibration Gap: 2.3% max - Calibration stays within 2.3% across age, gender, and region groups

A disparate impact ratio of 1.021 sits well inside the 0.8-1.25 range, indicating similar approval rates across genders.

This helps reduce regulatory risk and exposure to discrimination claims.
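Both fairness numbers can be sketched in a few lines. The definitions are assumptions, since the original does not spell them out: disparate impact as the protected group's approval rate divided by the reference group's, and the per-group calibration gap as |mean predicted risk - observed default rate|, maximized over groups. Which gender serves as the reference group is not stated; the toy data below is made up.

```python
def disparate_impact(approved, group, protected, reference):
    """Approval rate of the protected group divided by that of the reference group."""
    def rate(g):
        decisions = [a for a, gg in zip(approved, group) if gg == g]
        return sum(decisions) / len(decisions)
    return rate(protected) / rate(reference)

def max_group_calibration_gap(y_true, y_prob, group):
    """Largest |mean predicted risk - observed default rate| across groups."""
    gaps = []
    for g in set(group):
        idx = [i for i, gg in enumerate(group) if gg == g]
        mean_pred = sum(y_prob[i] for i in idx) / len(idx)
        mean_obs = sum(y_true[i] for i in idx) / len(idx)
        gaps.append(abs(mean_pred - mean_obs))
    return max(gaps)

def within_four_fifths(ratio, low=0.8, high=1.25):
    return low <= ratio <= high

group = ["F"] * 4 + ["M"] * 4
approved = [1, 1, 1, 0, 1, 1, 0, 0]
y_true = [0, 0, 1, 0, 0, 1, 1, 0]
y_prob = [0.1, 0.2, 0.7, 0.2, 0.3, 0.6, 0.5, 0.2]
di = disparate_impact(approved, group, protected="F", reference="M")
gap = max_group_calibration_gap(y_true, y_prob, group)
```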

Business Impact

For Banks:

- Regulatory compliance: Automated explanations support GDPR and fair lending explanation requirements

- Customer retention: Rejected applicants get a 6-12 month roadmap instead of being lost for good

- Operational efficiency: Customer service can point to automated explanations instead of writing custom responses

For Product Strategy:

- Product insights: 56.5% of generated counterfactuals involve improving the debt-to-income ratio, which suggests offering debt consolidation services

- Segment targeting: Identify "near-miss" applicants who are one debt consolidation away from qualifying

- Cross-sell: Applicants needing better LTV ratios are candidates for savings products

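Estimated value: For a bank with 50,000 annual applications and a 30% rejection rate (15,000 rejections), raising the reapplication rate from 5% to 15% means about 1,500 additional reapplications a year, which I estimate could generate $2-5M in additional loan origination revenue.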
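The volume side of this estimate is simple arithmetic. The revenue per reapplication is not given, so the sketch backs out what the $2-5M range implies, under the hypothetical assumption that every extra reapplication is approved and funded. `additional_reapplications` is a hypothetical helper.

```python
def additional_reapplications(applications, rejection_rate, reapply_before, reapply_after):
    """Extra reapplications per year from raising the reapplication rate among rejections."""
    rejected = applications * rejection_rate
    return round(rejected * (reapply_after - reapply_before))

extra = additional_reapplications(50_000, 0.30, 0.05, 0.15)

# Implied revenue per extra reapplication, assuming (hypothetically) all are approved and funded.
low_per_loan = 2_000_000 / extra
high_per_loan = 5_000_000 / extra
print(extra)                                         # 1500
print(f"${low_per_loan:,.0f} - ${high_per_loan:,.0f}")  # $1,333 - $3,333
```

If only some reapplications convert to funded loans, the implied revenue per loan rises proportionally.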

What I Learned

In regulated industries, explainability is a product requirement, not an optional feature. Banks need explanations for regulators and to maintain customer relationships.

Not all explanations are useful. "Be 10 years younger" is technically valid but useless. The system only suggests changes customers can make within 6-12 months.

Fairness requires more than equal treatment. The model must be equally well calibrated across demographic groups, not just equally likely to approve them. I treat calibration fairness as the higher standard.

Next Steps for Production:

- Cost-benefit analysis: Show customers the financial impact of each suggested change

- Multiple paths: Generate "quickest," "lowest cost," and "highest confidence" scenarios

- Progress tracking: Track applicant progress toward goals with 3-, 6-, and 9-month check-ins

- A/B testing: Measure impact on retention and reapplication rates vs. standard rejections